Approved Networks AOC & DAC Application Notes

Posted by Frank Yang on Dec 13, 2021

Approved Networks, a brand of Legrand, provides a broad range of direct attach copper cables (DACs) and active optical cables (AOCs). This application note illustrates typical use cases in the data center, the wiring closet, and the network engineering lab.

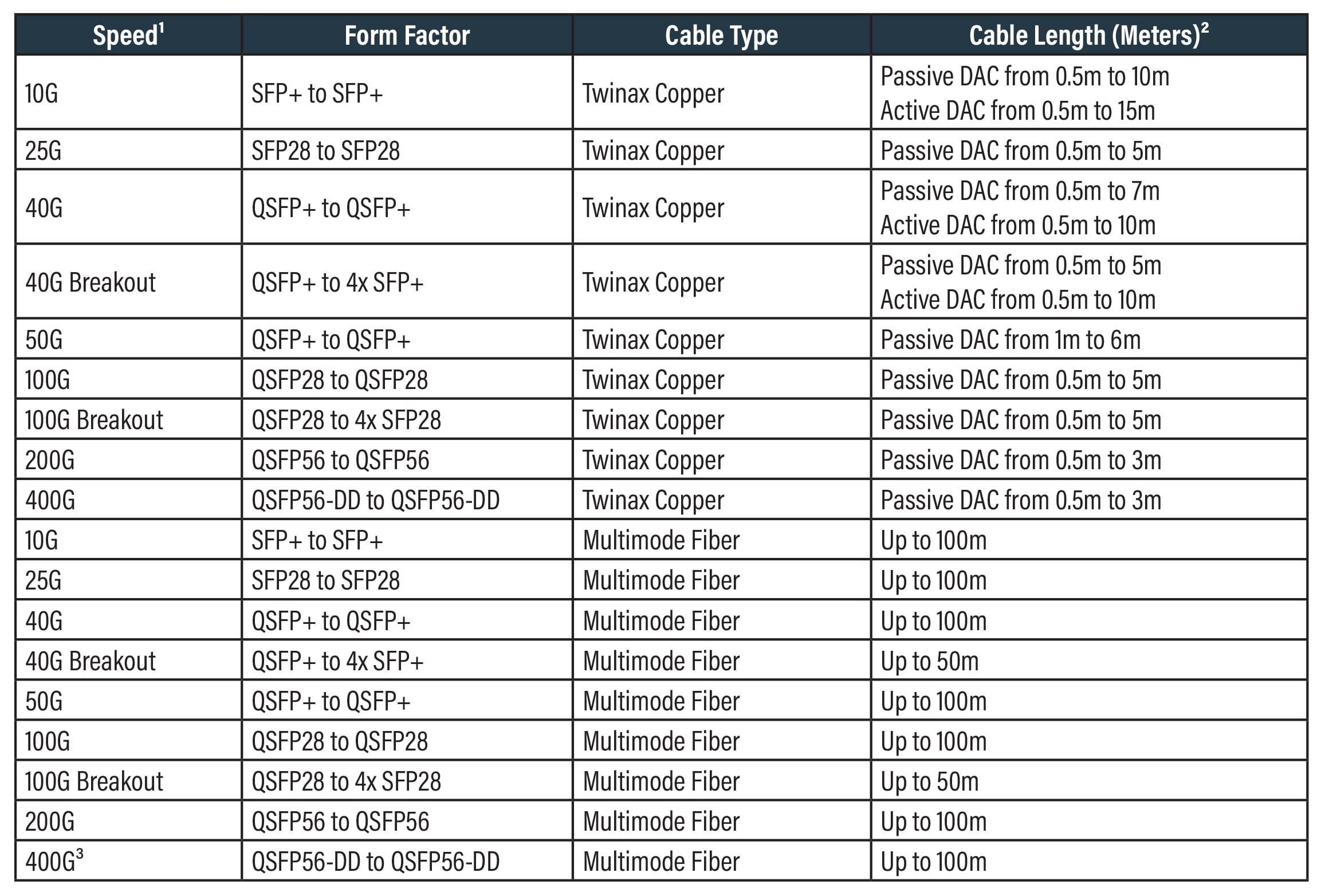

Portfolio Overview

Note:

- Product types and configurations are subject to change. Please consult your sales representative for product updates.

- AOCs are available in lengths up to 100m, but deploying cables longer than 30m is not recommended.

- 400G breakout cables are on the roadmap for a later release.

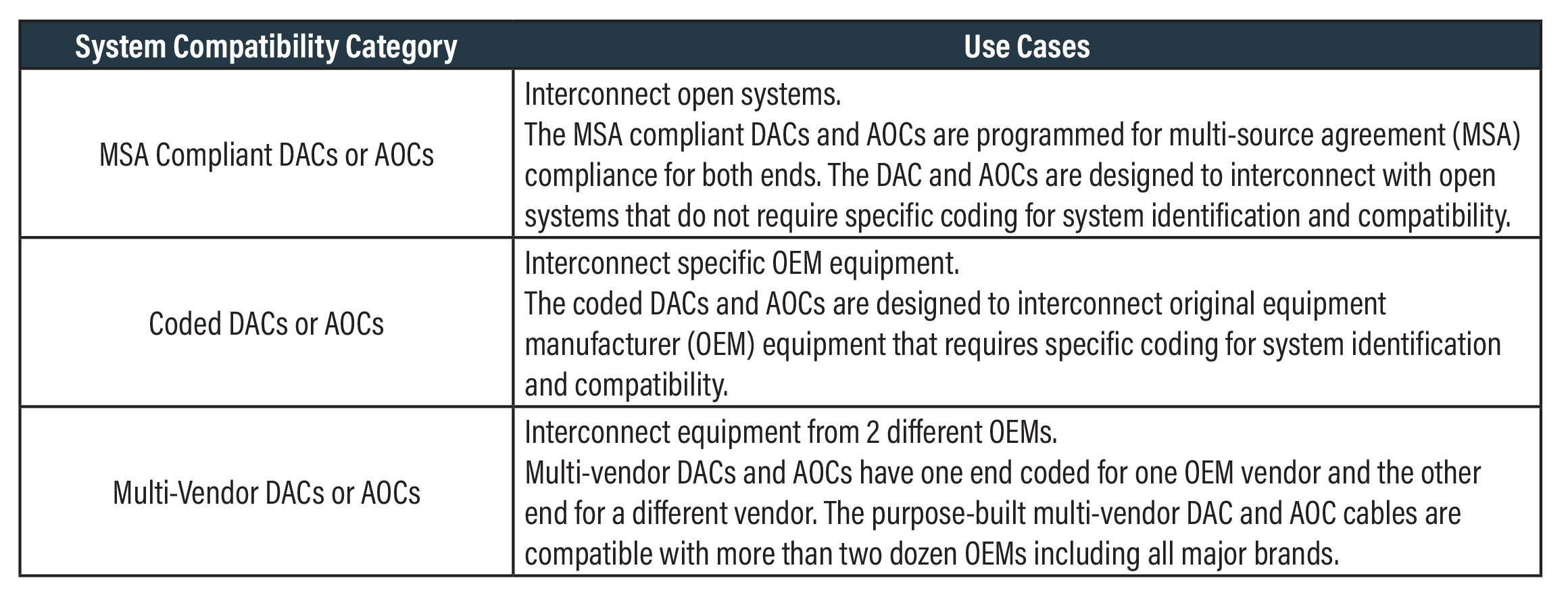

Approved Networks’ DACs and AOCs are categorized into three groups based on compatibility.

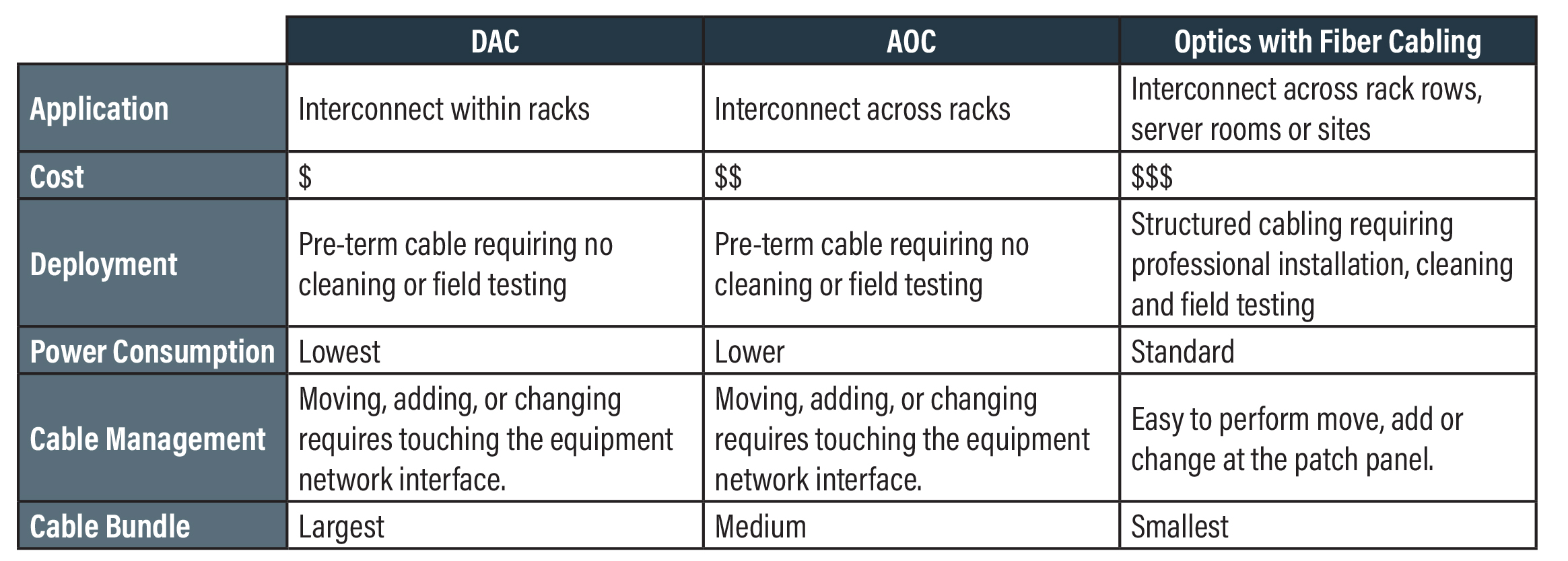

The following table compares Approved Networks DACs and AOCs with the traditional approach of using optics plus fiber cabling.

Key Benefits:

- Guaranteed Compatibility. Our DACs and AOCs are programmed by experts and 100% in-system tested at our robust California lab, which features virtually all major OEM switches and server cards.

- Customized Solutions. Our DACs and AOCs can be fully tailored to fit your specific needs, including custom multi-vendor programming, lengths, cable colors, and labeling.

- Availability and Fast Shipping. We provide quick-turn solutions for small orders and evaluations, and can ship large-quantity orders in under 3 weeks for select form factors.

- 100% TAA Compliant. Our DACs and AOCs are coded, tested, labeled, and packaged in California.

- Single Source. One supplier for optics, DACs, AOCs, and other connectivity solutions.

DACs and AOCs in the Data Center

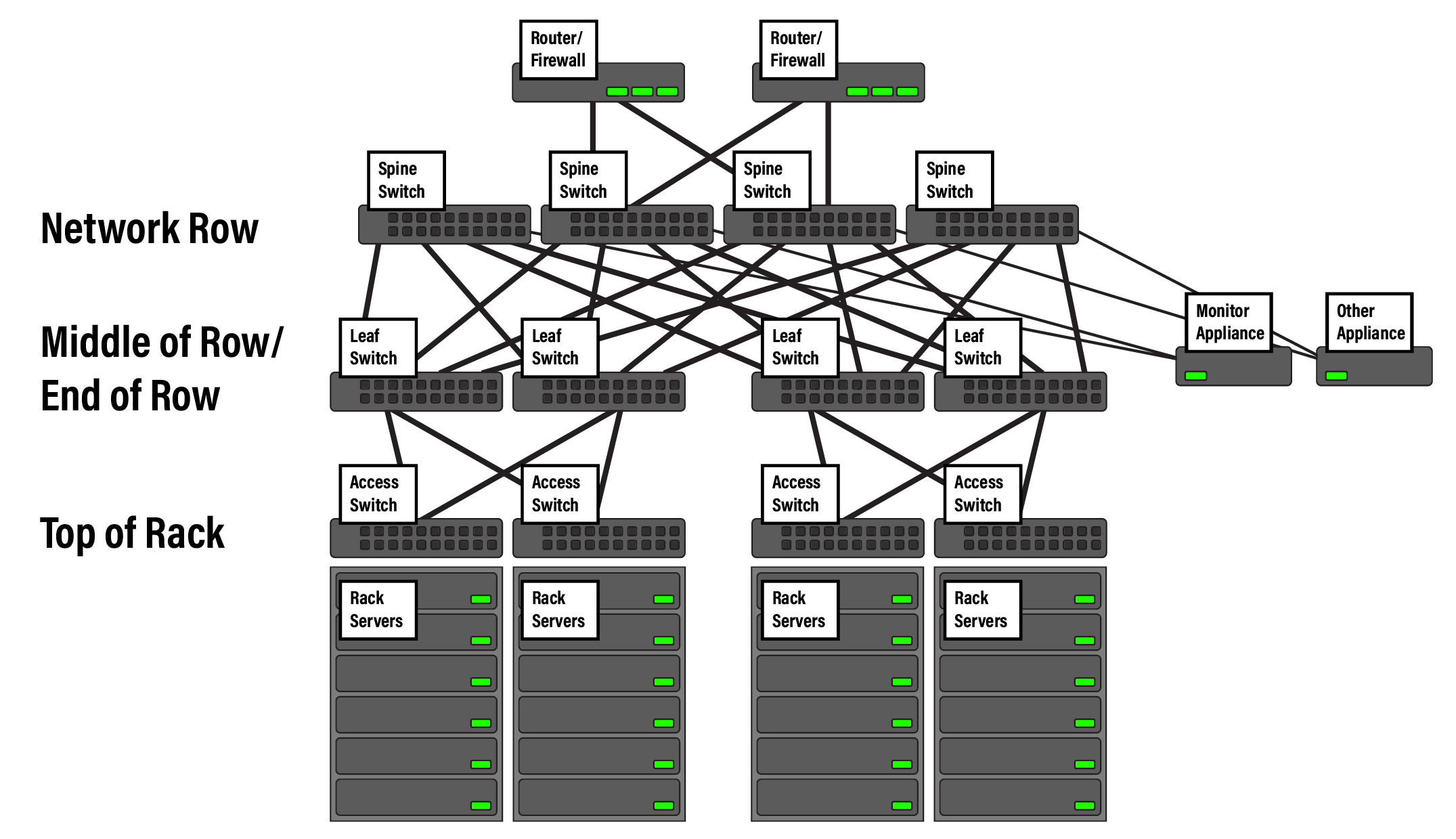

Figure 1 below illustrates a typical spine-leaf fabric architecture, which modern data centers have widely adopted to support cloud applications. This architecture keeps day-one build costs under control while allowing the network to scale quickly to meet today’s ever-expanding data needs.

Access Layer

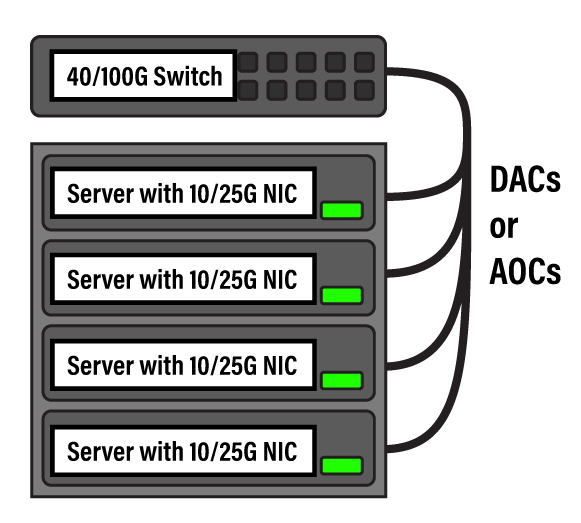

As illustrated in Figure 1, server access switches are often located at the top of rack (ToR). Approved Networks DACs are ideal for connecting ToR access switches to rack-mounted servers. Whether you have a 10G-connected server in an enterprise data center, a 25G server in a hyperscale or cloud provider data center, or a 40G/100G server for high-performance computing applications, DACs and/or breakout cables can provide the most robust, cost-effective connectivity to these servers at any required data rate.

In high-density deployments at the server access layer, Approved Networks breakout DACs are ideal for connecting a ToR switch to servers. For example, using a 40G QSFP+ to 4x 10G SFP+ breakout DAC to connect a 40G port of the ToR switch to four 10G servers lowers the cost and complexity of these connections. Similarly, using a 100G QSFP28 to 4x 25G SFP28 breakout DAC to connect a 100G port to four 25G servers will do the same for 100G to 25G connections. 400G breakout connections will be available for similar applications.
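The breakout arithmetic above can be sketched in a few lines of Python. This is a hypothetical planning helper, not an Approved Networks tool: it assumes each breakout cable consumes one switch port and fans out into four server-side lanes, as in the 40G QSFP+ to 4x 10G SFP+ and 100G QSFP28 to 4x 25G SFP28 examples. The names `BREAKOUTS` and `breakout_plan` are illustrative only.

```python
import math

# Illustrative table of the two breakout types named in the text:
# switch-side port -> (server-side form factor, per-lane rate in Gb/s, lanes per cable)
BREAKOUTS = {
    "40G QSFP+": ("SFP+", 10, 4),
    "100G QSFP28": ("SFP28", 25, 4),
}


def breakout_plan(switch_port: str, servers: int) -> dict:
    """Estimate breakout cables (one per switch port) needed to reach `servers` hosts."""
    if servers < 0:
        raise ValueError("servers must be non-negative")
    server_ff, lane_gbps, lanes = BREAKOUTS[switch_port]
    cables = math.ceil(servers / lanes)  # each cable occupies one switch port
    return {
        "breakout_cables": cables,
        "switch_ports_used": cables,
        "server_form_factor": server_ff,
        "per_server_gbps": lane_gbps,
        "unused_lanes": cables * lanes - servers,  # spare server-side ends
    }
```

For example, 48 servers at 25G need 12 QSFP28 breakout DACs on 12 switch ports with no spare lanes, while 10 servers at 10G need 3 QSFP+ breakout cables, leaving 2 lanes unused.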

Breakout AOCs can be used in the same high-density deployments for longer spans, e.g., from a middle-of-row position to the network row, or for any other connection longer than 7m.

Fabric

The leaf switch is often deployed in a middle-of-row (MoR) or end-of-row (EoR) position. The reach between the ToR switch and the MoR/EoR switch typically exceeds 7m but is often shorter than 30m. Approved Networks’ AOCs are ideal for interconnecting the ToR switch with the MoR/EoR switch at these distances. Although AOCs are available in lengths up to 100m, best practice is to deploy AOCs of 30m or shorter, because their bulky terminated ends make long cable pulls difficult.
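The reach guidance in this section can be summarized as a simple decision rule. The sketch below is an illustration under stated assumptions, not a vendor sizing tool: it treats 7m as the practical upper bound for DACs (inferred from the text's use of "longer than 7m" as the point where AOCs take over), applies the 30m best-practice ceiling for AOCs, and falls back to optics plus fiber beyond that. The `crosses_rooms_or_floors` flag reflects this note's advice to avoid pulling AOCs across rooms or floors. The `Media` enum and `select_media` function are hypothetical names.

```python
from enum import Enum


class Media(Enum):
    DAC = "direct attach copper cable"
    AOC = "active optical cable"
    OPTICS_PLUS_FIBER = "transceivers plus structured fiber cabling"


# Assumed thresholds, taken from the guidance in this application note.
DAC_MAX_M = 7.0               # AOCs are suggested for connections longer than 7m
AOC_RECOMMENDED_MAX_M = 30.0  # AOCs exist up to 100m, but 30m is the best-practice limit


def select_media(length_m: float, crosses_rooms_or_floors: bool = False) -> Media:
    """Suggest a cabling type for a point-to-point link of length_m meters."""
    if length_m <= 0:
        raise ValueError("length_m must be positive")
    # Long pulls, or routes through conduits between rooms or floors, are where
    # bulky pre-terminated ends become a problem: use optics plus fiber instead.
    if crosses_rooms_or_floors or length_m > AOC_RECOMMENDED_MAX_M:
        return Media.OPTICS_PLUS_FIBER
    if length_m <= DAC_MAX_M:
        return Media.DAC
    return Media.AOC
```

Under these assumptions, a 3m in-rack link maps to a DAC, a 20m ToR-to-MoR link maps to an AOC, and a 60m run maps to optics plus fiber, even though AOCs of that length are available.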

The spine-to-leaf fabric typically requires high-performance, high-bandwidth connectivity. In this use case, Approved Networks’ 100G and 400G AOCs are ideal for building the spine-leaf fabric.

Core routers, security appliances, monitoring appliances, and similar devices are typically connected to either the spine or the leaf switches, depending on the design. Here too, Approved Networks’ AOCs or DACs are an ideal way to connect these devices to the spine or leaf switch, with the choice between them depending on placement and reach.

Multi-Vendor Environment

The data center network is typically a multi-vendor environment. For example, a data center may include core routers made by Cisco or Juniper, spine switches by Arista, leaf or ToR switches by Juniper, servers with Intel, Broadcom, or Mellanox network interface cards (NICs), a firewall by Palo Alto, and monitoring appliances by Gigamon or Netscout. The challenge arises when equipment from different OEMs must be connected and at least one of the OEMs requires specific coding to ensure proper system identification.

For these environments, Approved Networks provides multi-vendor DACs and AOCs with one end coded for one vendor and the other end for a different vendor. These purpose-built multi-vendor DACs and AOCs support more than two dozen OEMs and are available in a broad range of lengths, speeds, and form factors. All multi-vendor DACs and AOCs are 100% tested in-system at Approved Networks’ engineering lab to ensure compatibility and quality. Before shipping, each end of a multi-vendor DAC or AOC is labeled with its intended OEM brand to reduce confusion in the field.
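When planning a multi-vendor build, it can help to turn a list of links into a cable count per vendor pair. The sketch below is hypothetical: the `CableSpec` type, `spec_for_link`, `bill_of_materials`, and the label format are illustrative and are not an Approved Networks ordering interface. One design choice worth noting is that a cable coded for Arista on one end and Juniper on the other serves either orientation, so the sketch sorts the two OEM names before counting.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CableSpec:
    """One cable type: each end coded (and labeled) for the OEM it plugs into."""
    end_a_oem: str
    end_b_oem: str

    @property
    def is_multi_vendor(self) -> bool:
        return self.end_a_oem != self.end_b_oem

    def labels(self) -> tuple[str, str]:
        # Hypothetical per-end label text, mirroring the practice of marking each end.
        return (f"{self.end_a_oem} end", f"{self.end_b_oem} end")


def spec_for_link(oem_x: str, oem_y: str) -> CableSpec:
    """Normalize end order so Arista-Juniper and Juniper-Arista map to the same cable."""
    a, b = sorted((oem_x, oem_y))
    return CableSpec(a, b)


def bill_of_materials(links: list[tuple[str, str]]) -> Counter:
    """Count cables needed per CableSpec for a list of (OEM, OEM) links."""
    return Counter(spec_for_link(x, y) for x, y in links)
```

For example, two Arista spine to Juniper leaf links (listed in either order) and one Juniper-to-Juniper link yield two multi-vendor Arista/Juniper cables and one single-vendor Juniper cable.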

Approved Networks DACs and AOCs can also be used for connections of applicable lengths in other data center network architectures (e.g., the traditional multi-tier architecture).

DACs and AOCs in the Wiring Closet

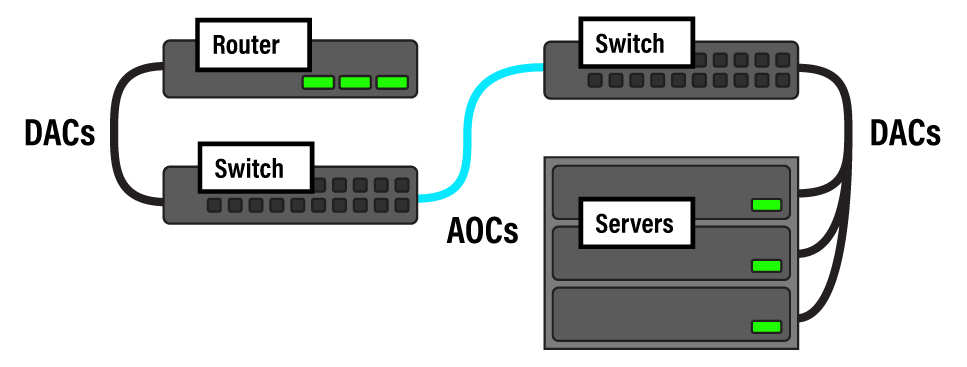

The wiring closet provides network access for desktop computers, laptops, and mobile devices in commercial buildings. It often hosts servers for local access, and can also host routers such as a campus core router or a customer premises equipment (CPE) router for wide area network (WAN) access. In this environment, DACs and AOCs can interconnect routers to switches, switches to switches, or switches to local servers wherever the required cable length falls within their reach.

Equipment from different OEM vendors often needs to be interconnected in this environment as well. For example, deploying voice over IP (VoIP) phones in a commercial building might require connecting an Adtran switch to an Aruba switch. As in the data center example, network engineers can choose a multi-vendor Approved Networks DAC or AOC coded for Adtran at one end and Aruba at the other.

Note: best practice is to avoid pulling AOCs across rooms or floors. The bulky ends of AOCs will not fit through conduits or similarly tight spaces. For those applications, standard fiber riser cabling should be used.

DACs and AOCs in the Network Engineering Lab

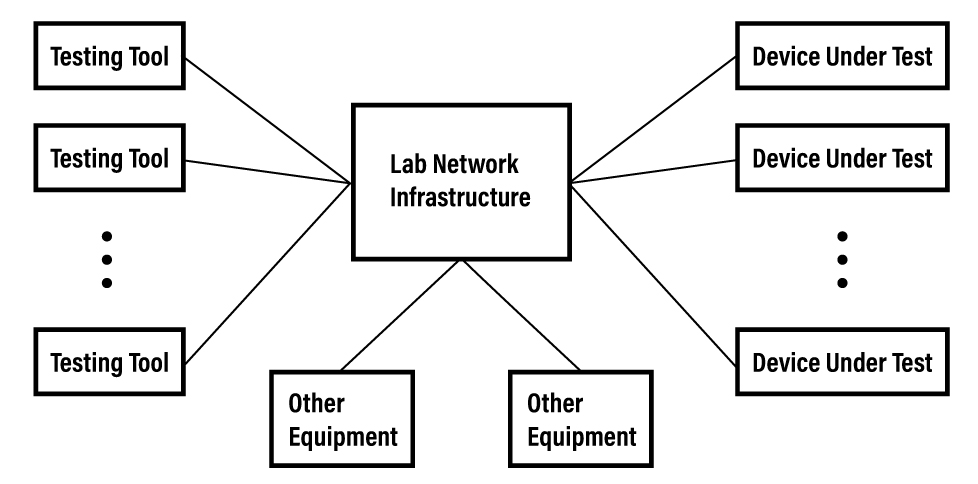

Another ideal, yet often overlooked, place to benefit from DACs and AOCs is the network engineering lab. Figure 4 is a high-level illustration of the network engineering lab environment.

Lab managers and network engineers often need to run network testing tools and other equipment against devices under test (DUTs). The tests may include functionality, interoperability, performance, and stress tests. If the tests do not require a specific Layer 1 connectivity product and the required cable length is within reach, Approved Networks DACs and AOCs can be used to build the connectivity for the test beds.

For example, a service provider’s network engineering lab might need to test IP Multiprotocol Label Switching (IP/MPLS) functionality over a 100 Gigabit Ethernet (100GE) link between a Cisco router and a Nokia router. This IP/MPLS interoperability test does not require a specific Layer 1 connectivity product: as long as the 100GE link is established at Layer 2, the tests can be performed. Network engineers can choose an Approved Networks 100G DAC or AOC with one end coded for Cisco and the other end for Nokia. This approach is much more cost-effective, and more reliable, than using optics plus fiber cabling.

Conclusion

Approved Networks provides a broad range of DACs and AOCs to support applications in the data center, wiring closet, network engineering lab, and more. Using Approved Networks DACs and AOCs means guaranteed compatibility, customized solutions, fast delivery, 100% TAA compliance, and cost-effectiveness.

For more information on ordering DACs and AOCs, please refer to the appendices on the following pages.